OpenAI has released GPT-5.3-Codex, describing it as its most capable agentic coding model so far. The headline number is straightforward: it runs about 25% faster. But the bigger story is that Codex is expanding beyond writing code and beginning to look like a general-purpose software agent.

The Codex team said GPT-5.3-Codex even helped debug and deploy parts of itself during development. That detail may sound small, but it signals a shift in how these systems are built and maintained.

Here’s what actually changed, and why it matters.

From Code Assistant to General Software Agent

Earlier versions of Codex focused mainly on writing and reviewing code. GPT-5.3-Codex is positioned differently. OpenAI says it can now support work across the entire software lifecycle, including debugging, deployment, monitoring, writing product requirement documents, editing copy, running tests, analyzing metrics, and even building slide decks or spreadsheets.

That reflects how software teams actually work. Developers rarely spend their entire day writing code. They review pull requests, fix deployment issues, respond to incidents, document decisions, and coordinate with product and design teams. A tool that supports only coding solves part of the problem. A tool that understands the broader workflow begins to change how teams operate.

OpenAI also says the model can be steered mid-task without losing context. In practice, this means you can interrupt a long-running process, adjust instructions, and continue without starting over.

25% Faster and Built for Longer Tasks

Performance is another focus. GPT-5.3-Codex is reported to run 25% faster and to handle longer-running tasks more effectively. Developers who use agentic coding tools know the pattern: you assign a task, wait, and switch to something else while it runs. Faster execution shortens that wait, and across a day of delegated tasks the savings add up.

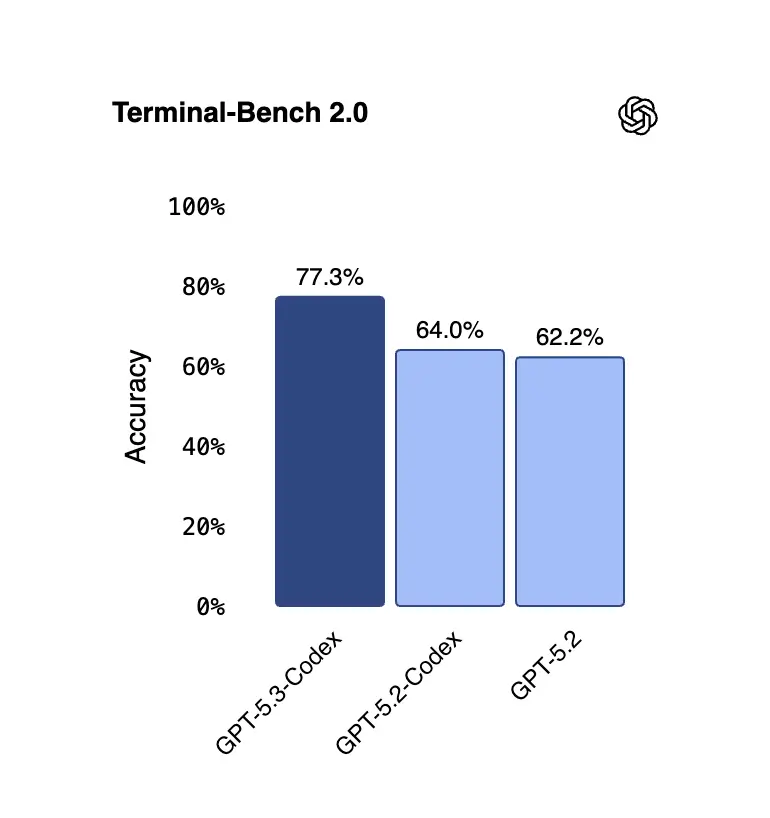

OpenAI claims new highs on SWE-Bench Pro and Terminal-Bench, along with strong performance on OSWorld and GDPval. Together these benchmarks cover real-world software engineering, command-line work, computer use in a desktop environment, and professional knowledge-work tasks. Benchmarks never tell the full story, but they do signal that the model is improving on structured engineering tasks.

OpenAI also says the model completes tasks using fewer tokens. In large-scale deployments, token usage directly affects both cost and speed, so for teams running Codex as part of their daily workflow, the same work gets done faster and for less.

Better Handling of Underspecified Prompts

One of the more interesting updates is how GPT-5.3-Codex handles vague instructions. OpenAI says “underspecified” prompts now produce richer and more usable results.

In earlier systems, vague prompts often led to shallow outputs. Now, if a user asks for a basic website, the model defaults to adding sensible structure and functionality instead of returning a minimal placeholder.

For product teams and developers, this lowers the barrier between idea and prototype. You can start with rough instructions and refine from there, instead of crafting highly detailed prompts upfront. It shifts the interaction from precise command writing to collaborative iteration.

Codex Helped Build Itself

Perhaps the most striking claim is that GPT-5.3-Codex was instrumental in its own development. The Codex team used early versions of the model to debug training processes, manage deployment workflows, and diagnose test results.

This introduces a feedback loop in which the system contributes to improving itself. It does not mean the model independently designed its successor. Humans still guide architecture, training decisions, and evaluation. But it does mean the model participated in the engineering process.

As these loops tighten, development cycles may shorten. AI tools that assist in debugging and deployment reduce friction for the teams building them. The better the model becomes, the more useful it is in refining the next version.

Cybersecurity and Safeguards

OpenAI classifies GPT-5.3-Codex as “high capability” for cybersecurity tasks under its Preparedness Framework. The model has been trained to identify software vulnerabilities and includes expanded safeguards and monitoring.

Alongside the release, OpenAI announced Trusted Access for Cyber and a $10 million API credit program to support cybersecurity research. The company says it is applying a precautionary approach, including dual-use safety training and automated monitoring.

This reflects a broader tension. As coding agents become more capable, they can be used both to secure and to exploit systems. Training models to detect vulnerabilities is useful for defense, but it also raises questions about access control and oversight. Capability growth and safety measures now have to advance together.

Competitive Context

The release of GPT-5.3-Codex comes at a moment of increased competition. Anthropic announced Claude Opus 4.6 at the same time, and its Claude Code agent has built a strong following among developers.

OpenAI also recently introduced a dedicated Mac app for Codex, signaling that it views this product line as more than an experimental feature. The version number is notable as well: the main GPT line currently sits at 5.2, yet Codex has moved to 5.3. Nothing has been formally announced, but the jump suggests broader updates may follow.

The coding agent space is quickly becoming central to how AI companies differentiate themselves. Performance, speed, autonomy, and safety are now competitive variables.

What This Means for Teams

GPT-5.3-Codex points toward a way of working in which developers spend less time on repetitive execution and more time directing systems. If agents can handle debugging, deployment, documentation, and extended tasks, the human role shifts toward supervision, architectural decisions, and final judgment.

That does not remove engineers from the loop. It changes the loop.

Teams adopting these tools will need to rethink workflows. Long-running agents require monitoring. Mid-task steering requires clarity about goals. Efficiency gains depend on integration with existing systems, not just on model capability.

The more meaningful question is not whether GPT-5.3-Codex writes better code. It is whether organizations are ready to adapt to agents that operate across projects rather than inside single prompts.

Final Thoughts

GPT-5.3-Codex shows where software development is heading. Agents are getting faster, more autonomous, and more involved in building the systems that create them. The tools will keep improving; whether that pays off depends on teams reshaping their workflows around what the agents can now do.

Are you structuring your engineering process around AI agents, or still using them as side tools?

What is GPT-5.3-Codex?

GPT-5.3-Codex is OpenAI’s latest agentic coding model designed to support the full software development lifecycle, not just code generation.

How is GPT-5.3-Codex different from earlier Codex versions?

Unlike earlier versions focused mainly on writing code, GPT-5.3-Codex supports debugging, deployment, documentation, monitoring, and extended agent-style tasks.

Is GPT-5.3-Codex really 25% faster?

OpenAI reports that GPT-5.3-Codex runs approximately 25% faster and uses fewer tokens per task, which improves both speed and cost efficiency.

Can GPT-5.3-Codex handle long-running development tasks?

According to OpenAI, yes. It is built for longer, agent-style workflows and can be steered mid-task without losing context.

Did GPT-5.3-Codex help build itself?

According to OpenAI, early versions of GPT-5.3-Codex were used during development to help debug training, manage deployment workflows, and diagnose test results.

Is GPT-5.3-Codex safe for cybersecurity use?

OpenAI classifies it as high capability for cybersecurity tasks under its Preparedness Framework and has added safeguards, automated monitoring, and controlled access through programs such as Trusted Access for Cyber.